Our role in the adoption of AI technologies, like ChatGPT

"Resenting a new technology will not halt its progress."

Marshall McLuhan

I’ve been asked a lot of questions about my take – Akhia’s take – on AI, specifically as it relates to the emergence of ChatGPT.

There is a lot being said about this already. Seems like everyone has a take on it and what it could mean.

It could mean the end of critical thinking.

It could eliminate a lot of jobs.

It could move us closer to a universal basic income.

It could create a lot more time for other things.

It could make us rely on machines to think for us.

A lot of these things are already happening.

- Critical thinking? When’s the last time you did your own research (vs. using Google)?

- As for a larger fear, around lost jobs … well … yes. This could happen. Just as it has with our world becoming more automated. (Ever use a self-checkout lane?)

- More time for other things? Inventions such as Roomba, Instant Pot … hell, the DVR, have created a lot of time for us. We’ve been down this road before.

- Machines thinking for us? They already do. Oh, I didn’t realize you still used a map, a typewriter and a real address book. Have you memorized every number in your phone?

Fear usually accompanies change or progress. It’s natural to worry about what could happen. But I’ve read and heard that we shouldn’t be afraid of AI as it begins to assimilate into our world.

Be not afrAId

People say you need that human perspective on things. That ability to think and apply. To strategically plan for what’s ahead and the best way to approach it. AI will supplement that – with us acting as an editor and curator to ensure nothing is lost in translation.

I agree with this. We will learn to work with AI and account for it as a new tool in our growing martech stack. As you can see below, we just aren’t there … yet.

(This is a picture I asked DALL-E to draw of someone waiting in a lobby. It’s only mildly disturbing.)

But I am afraid. (Of more than just that drawing.)

No, not of learning to use and work with AI. I think we will handle that part just fine. We picked up social media pretty quickly (and look how well that turned out!) so I anticipate we will adapt.

No, my fear is … we will like it. A lot.

In order to use AI the way I’m hearing we should – as a tool – we need to have a balanced relationship with it. But MY worry is that as time goes on, it will become a lot easier and a lot faster for us to just plug in a prompt, take what we’re given and roll on.

We know the risks: Where is that info coming from? Who’s vetting it? Who’s saying it’s accurate?

Similar to how we Google things now (look it up, spend most of our time on the first page and either validate our thinking or pull a reference to ‘fit’ our point), we won’t take the time to double-check it, vet it or consider the source … not to mention find a counterpoint just in case, you know, we may not be right.

How long until we settle into that pattern and the human element becomes completely devalued? Or worse, we aren’t simply using machines anymore – we’re relying on them.

See, that’s the worry with the critical thinking element. It’s not that AI replaces our ability to think critically. It’s that it reshapes what critical thinking actually is. We will accept what is given to us … instead of forming our own thoughts. Something we were well on our way to before ChatGPT arrived on the scene.

Can we help ourselves ... keep thinking for ourselves?

Make no mistake – we will not only exchange a small percentage of critical thinking for time, we will do it willingly. We will do it because even though there may be a long-term risk, we just won’t care. (See Global Warming if you don’t believe me.) We live with the short-term benefit. There is something worse than a long-term risk: knowing what could happen but choosing to do it anyway.

Want proof? Take a minute to read this article from Business Insider:

I outsourced my memory to AI for 3 weeks.

In it, the author talks about how much text we consume on a regular basis and how difficult it is becoming to remember things.

So rather than try to track and remember everything, he tried out an app called Heyday. It’s essentially a browser extension that sees everything you do … and can then recall that info for you when you’re working on a specific topic.

Did it work? According to the author it did. With one issue – but that won’t stop him from continuing to use it:

“… while Heyday was effective at bridging the gap of my limited memory, making research easier, I worry that a reliance on the tool would make my memory even worse. But given the mounting volume of text we read online, perhaps we have already passed the point of no return. The modern world demands that we consume a massive amount of information, and our biological memories simply don't have the ability to remember it all. So instead of fighting a losing battle, an extended hard-drive-esque space like Heyday can be a vital supplement. For me, at least, Heyday is here to stay.”

So what should we do in the short term?

From an agency perspective, the best thing we can do is what we always do – continue to vet the technology and learn how best to use it, when to use it, how to measure it and how to anticipate where it goes next.

Understand that just because we can doesn’t mean we should. Identify the instances where it makes sense to apply the technology and hone it until it’s producing optimal results for our clients.

And most importantly, be transparent about how we’re using it – and when we’re using it. The goal is not to give ‘ghostwriting’ a whole new meaning, but instead, to educate our clients on the opportunity that is ahead.

As individuals? That’s a different story. All of these things apply, of course. However, we also need to think about ‘should’ even if we can. And how does that get applied to those you’re responsible for?

For example, should you let your kids use it? Or is it better to teach them the value of a rough draft to help the thinking process?

I’m not going to make a case for where the line is drawn. If I had had Wikipedia … I never would have cracked one encyclopedia. If Google had been what it is now when I was in college, I never would’ve had to go to the library to track down research papers at other campuses. We can’t ignore the fact that the line marking what we’re willing to give up and trust machines with has moved a bit – and will likely move more. I’m just saying be aware that there is a line. Right?

The bottom line

Evaluating and understanding ChatGPT, as well as what has come before – and will come after it – is what ultimately does make us human. It’s our responsibility to understand technology and how it gets infused into our world. Only we can decide how we use this technology.

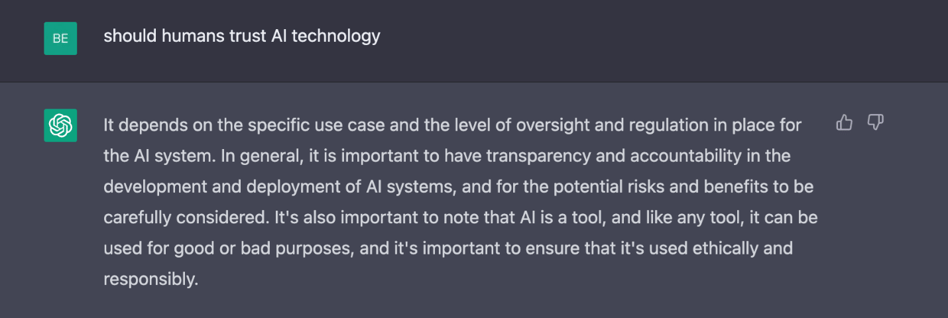

Even AI agrees with that:

Written by Ben Brugler, CEO, President
